Beyond Copilot, Cursor and Claude Code: The Unbundled Coding AI Tools Stack

Mix and match IDEs, models and providers for a DIY setup

The AI coding landscape has shifted. We went from "just pay $20 for GitHub Copilot" to a completely unbundled stack where you mix and match your Provider, Model, and Tool.

It’s messy, but if you get the setup right, you get better performance for a fraction of the cost.

Here is how I break down the current state of AI coding going into 2026, and how to navigate the choices between mainstream giants (Cursor, Claude) and the new wave of open models (Minimax M2, Qwen3, Kimi K2).

NOTE: Parts of this article are written using Gemini 3 based on some of my rough notes on this topic.

The Breakdown: The 3 Layers

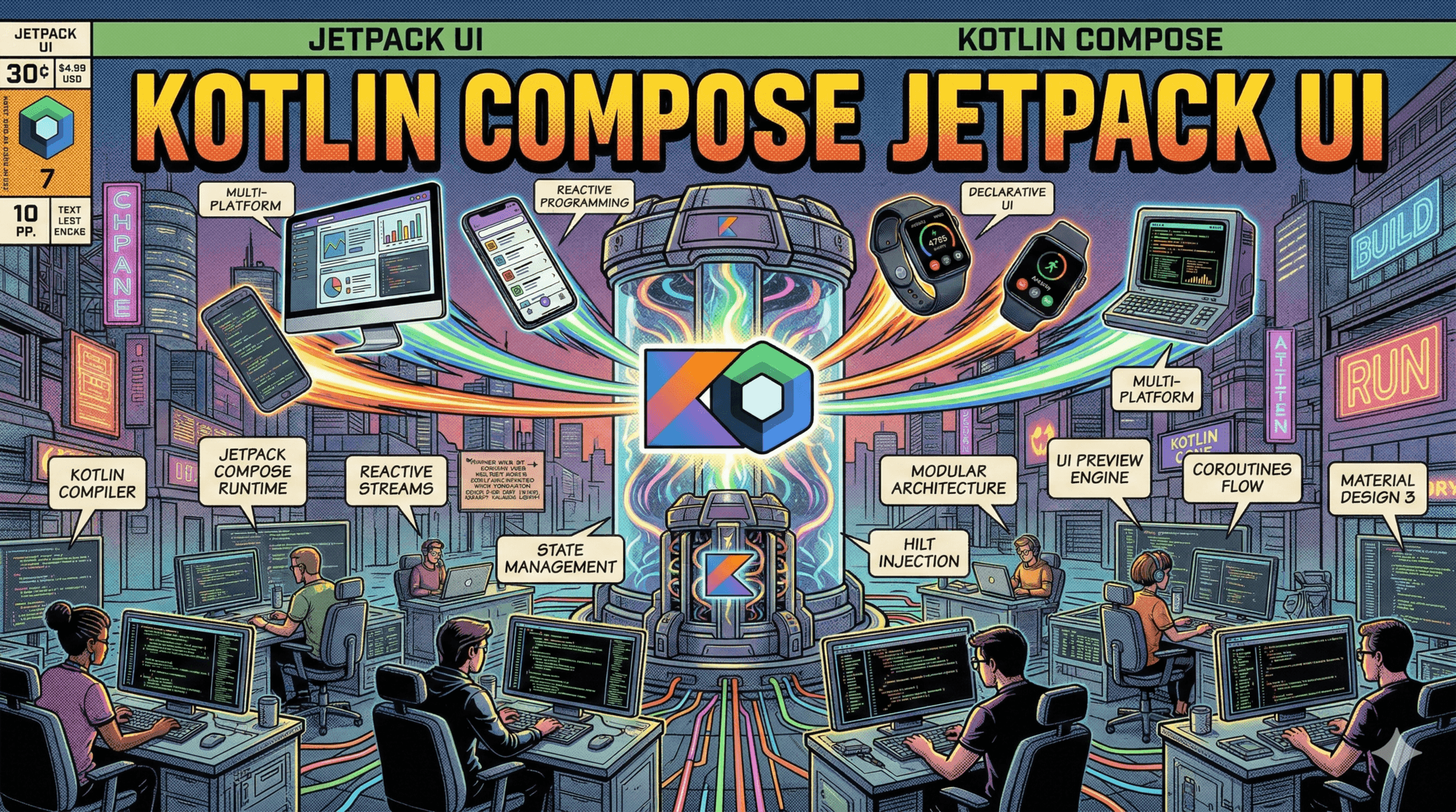

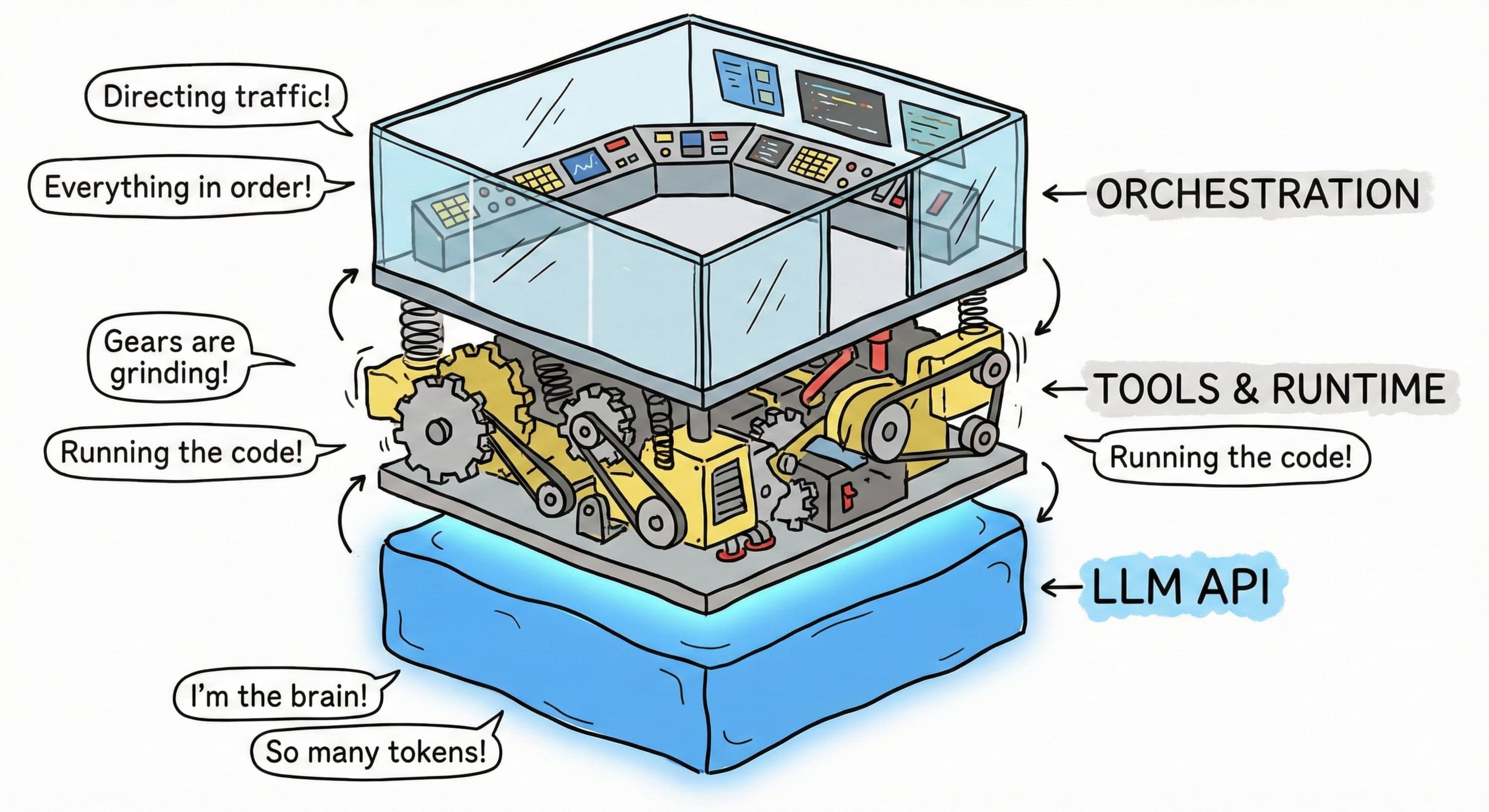

Just like we separate our frontend from our backend, the AI stack is now decoupled:

The Provider: Who runs the GPU? (AWS, Cerebras, your own Mac Studio).

The Model: The brain. (GPT-5.1, Claude Opus, Qwen3).

The Tool: Where you type. (IDE, CLI, or a background agent).

Not only are there multiple mix-and-match combinations possible, I have even been using different setups during different sessions/stages working on the same project too.

Let’s dive into each.

Layer 1: The Provider

This is purely about who runs the model. You have six options, ranging from "easy but pricey" to "DIY hardware."

Proprietary Labs: You go straight to the source (OpenAI, Anthropic, Google). Tokens are expensive, which is why we now see "Max" plans with flat fees to cap the bleeding.

The Big Clouds: AWS Bedrock, GCP Vertex, Azure. This is mostly for enterprises that want the billing on their existing cloud contract. It’s safe, but rarely the fastest or cheapest. But that said, apart from Google’s models only available on their TPUs, other SOTA models are available via the big 3 clouds mostly.

The Chinese Labs: Alibaba (Qwen), DeepSeek, Moonshot serve their models directly too. They are fairly cheap and performant, but you have to deal with the censorship. Asking about Tiananmen Square might flag you, but for React components, they are beasts. Creating new account on Alibaba Cloud is not exactly pleasant UX either.

Infra-as-a-Service (Resellers): Providers like DeepInfra, Parasail, and Novitas rent massive GPU clusters and resell access by the token. They are significantly cheaper than the labs, but there is a catch: they often aggressively quantize models (lowering precision) to save VRAM, so you might lose some "smartness" on edge cases. There are now some

exactomodels to counter this problem.Dedicated LLM Chips: This is where the speed is. Cerebras and Groq don't use standard GPUs; they use wafer-scale engines or LPUs.

Real numbers: Cerebras is clocking ~3,000 tokens per second on Llama 3.1 70B.1 That is not reading speed; that is "blink and it's done" speed.

I’d highly recommend trying out gpt-oss-120b on groq/cerebras and seeing the speed. Once code starts appearing immediately after you hit enter, it can change your mind about the importance of tok/s numbers.

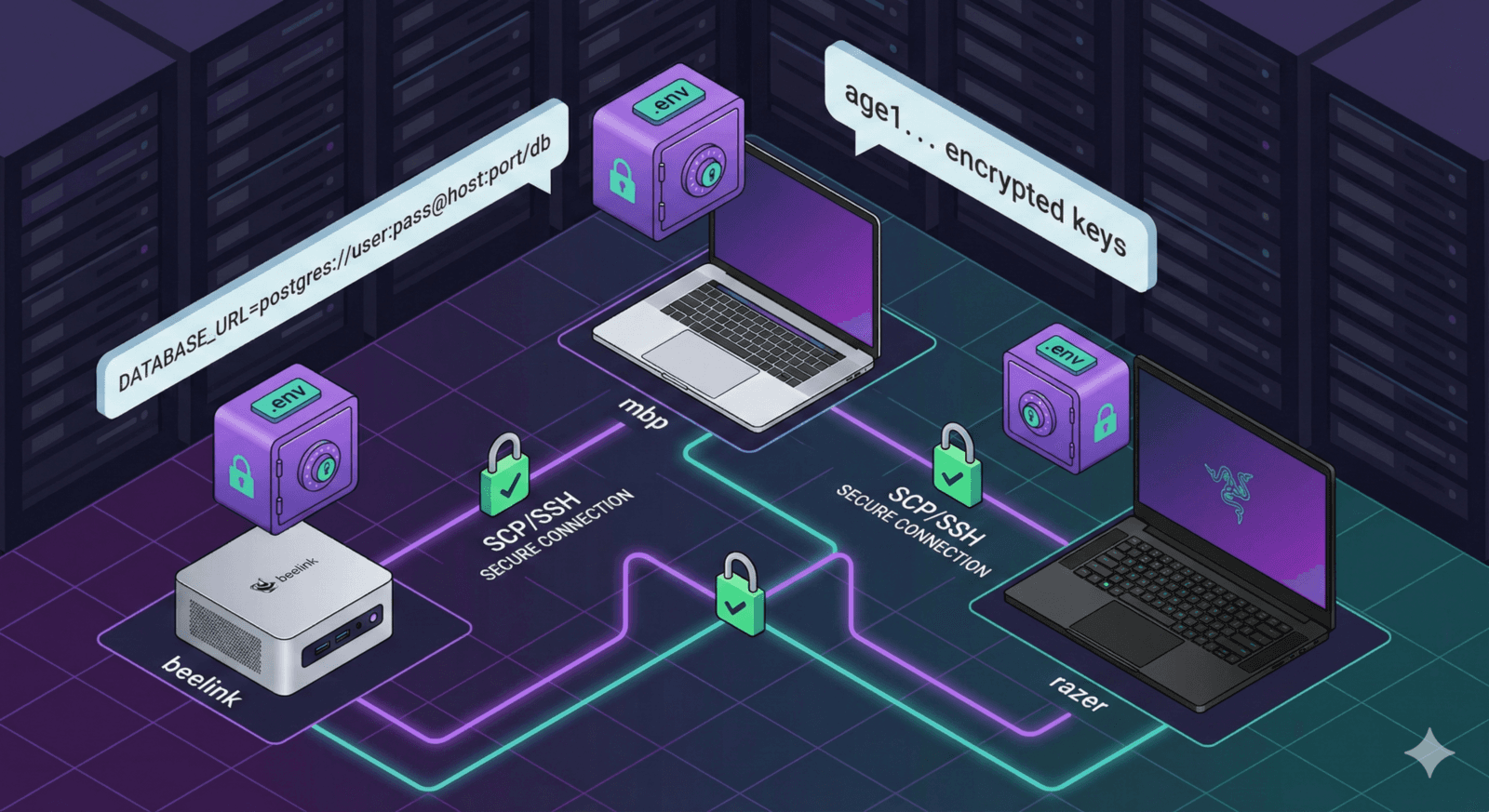

Self-Hosting (Local): Hardware has finally caught up.

Apple: A Mac Studio with an M2/M4 Ultra chip and 128GB+ RAM.

AMD: The new Strix Halo (Ryzen AI Max+ 395) APUs support up to 128GB of LPDDR5X RAM.

The Result: You can run gpt-oss-120b or GLM-4.5-Air locally with zero API costs and total privacy.

Layer 2: The Model

In the unbundled stack, the "Model" is the fungible component. Developers no longer ask "Is Copilot good?" but rather "Which model is best for refactoring legacy C++ vs. writing new React components?" The market has segmented into three distinct categories: The Classic Trio (High Intelligence), Chinese Open Models (High Value), and Fast/Vibe Models (High Velocity).

The Classic Trio: The Gold Medals

These models represent the current state-of-the-art (SOTA) for high-complexity tasks. They are generally too expensive for autocomplete but essential for architectural planning, complex debugging, and tasks requiring deep "System 2" reasoning.

- GPT-5.1 Codex Max (OpenAI):

This is the gold standard for agentic coding. It is built for long-running, detailed work.

Capability: It shows a remarkable aptitude for sustaining long-horizon coding tasks. Benchmarks place it at the top of SWE-Bench Verified with a score of 77.9% (for "xhigh" thinking level)

Mechanism: Its key innovation is compaction, allowing it to work over millions of tokens by summarizing past interactions. This unlocks "project-scale refactors" where the model must understand the implications of a change across hundreds of files

Use Case: Deep debugging sessions, multi-hour agent loops, and massive refactors.

- Claude Opus 4.5 (Anthropic):

Anthropic's flagship model excels in nuance and "human-like" reasoning.

Capability: It is described as "dynamic rather than overthinking," capable of interpreting ambiguous requirements and reasoning over architectural tradeoffs.8 It scores 80.9% on SWE-Bench, slightly edging out competitors in certain evaluations

Mechanism: Opus 4.5 preserves "thinking blocks" from previous turns in the model context, allowing it to maintain a coherent chain of thought throughout a conversation

Use Case: Architectural planning, complex system design, and tasks where "understanding the intent" is more important than raw speed.

- Gemini 3 Pro (Google):

The multimodal beast.

Capability: With a 1 Million token context window as standard, Gemini 3 Pro is the premier model for "whole repo" understanding. It outperforms competitors in multimodal tasks—reading screenshots of UIs alongside code—and boasts a GPQA Diamond score of 91.9%, indicating PhD-level scientific reasoning

Mechanism: It uses a "thinking process" that allows it to reason deeply before generating a response, similar to Chain-of-Thought prompting but baked into the model architecture

Use Case: Large-scale codebase understanding, multimodal UI debugging, and scientific/mathematical coding tasks.

The Chinese Open Models: The Commoditizers

The gap between proprietary SOTA and open weights has collapsed. Models from Chinese labs offer 90-95% of the performance of the "Classic Trio" at nearly zero marginal cost for local users or significantly reduced API costs.

Qwen3 Coder (Alibaba):

Qwen3 Coder is an open-source marvel supporting 119 languages.

Innovation: It features a "Hybrid Thinking Mode" that intelligently switches between deep reasoning (slow) and fast response based on the complexity of the query.34 This adaptability makes it an incredibly versatile tool for polyglot developers.

Performance: It rivals Claude Sonnet 4 in coding benchmarks, making it a viable free alternative for local hosting

DeepSeek V3 / R1:

Known for its "Deep Thinking" capabilities, DeepSeek R1 forces the model to verify its own logic steps before outputting code.

Innovation: The R1 model utilizes reinforcement learning strategies to "self-correct" during the generation process. This significantly reduces logic errors in complex algorithms, making it widely considered the best price-to-performance model on the market

Economics: Its ultra-low cost (due to efficient MoE architecture) allows developers to use it for "brute force" tasks—generating 100 variations of a function to find the best one—without breaking the bank.

Moonshot Kimi K2:

Kimi K2 is a "Thinking Agent" model.

Innovation: It is meticulously optimized for agentic capabilities, specifically tool use. Unlike models that struggle with multi-step instructions, Kimi K2 is trained to execute 200-300 sequential tool calls (search, file read, file write) without losing the thread of the task

Performance: It scores 71.3% on SWE-Bench Verified, placing it in the upper echelon of coding models

The Velocity Models: "Fast" & "Vibe"

A new category of model has emerged focused purely on latency. These are used for "Vibe Coding"—where the developer iterates rapidly in natural language, expecting near-instant visual feedback.

Grok Code Fast (xAI):

Grok Code Fast is optimized for sheer speed in agentic workflows.

Philosophy: xAI recognized that agentic loops (Reasoning -> Tool Call -> Observation -> Reasoning) are often bottlenecked by the model's latency. Grok Code Fast is designed to be "blazing fast," allowing it to call dozens of tools before the user has even finished reading the first paragraph of the thinking trace

Specs: It supports a 256k context window and is particularly adept at TypeScript, Python, and Rust

GLM-4.5 Air (Zhipu AI):

A lightweight powerhouse.

Efficiency: GLM-4.5 Air requires only 32-64GB of VRAM to run locally. This makes it the most powerful model that can comfortably fit on a high-end consumer workstation (like a dual 3090 setup or a Mac Studio) while maintaining strong instruction following capabilities.39

Adoption: It has become a favorite for local "vibe coding" setups where users want privacy without sacrificing too much intelligence. Even Windsurf and Cursor have built their “own” models by basically fine-tuning this.

gpt-oss-120b:

The gpt-oss-120b represents the community's effort to run massive models on dedicated silicon like the Strix Halo Running a 120B model locally at acceptable speeds transforms the development experience, offering near-SOTA capabilities without the latency or cost of the cloud. But if run on a cloud like Cerebras or Groq, it can also give almost 1000+ tokens/second of speedy outputs.

Layer 3: The Coding Interface

The tool layer is where the developer lives. In the bundled era, this was VS Code with a proprietary plugin. In the unbundled era, the tool is a flexible chassis that accepts any provider and any model. The market has segmented into Agentic IDEs, Open Source tools, CLIs, and Vibe Coding Platforms.

The Agentic IDEs: Cursor, Windsurf, Copilot

These are forks or heavily modified versions of VS Code designed to integrate AI natively into the editor's core loop, rather than bolting it on as a sidebar.

Cursor:

Cursor is the pioneer of the unbundled IDE space.

Features: It allows users to "tab" through code generation and features "Composer," a multi-file editing agent. Crucially, Cursor allows users to input their own API keys (BYOK) for models like Claude Opus or GPT-4o, giving them control over costs and capabilities

Philosophy: Cursor treats the AI as a pair programmer that "lives" in the editor, capable of seeing the cursor position, diffs, and terminal output.

Windsurf:

Windsurf focuses on "Flow" states.

- Features: It utilizes deep context awareness of the codebase ("Cascade") to predict the developer's next move. By indexing the codebase locally, it allows for "context-aware" completions that understand the broader architecture of the project.

GitHub Copilot (Evolution):

Even the progenitor of the bundled model has had to adapt. Copilot has evolved to support multiple models (Anthropic, Gemini, OpenAI) within its interface, acknowledging the unbundling trend. However, it still largely operates as a "subscription" service rather than a pure BYOK platform.

The Open Source & "Bring Your Own Key" (BYOK) Tools

For developers who reject vendor lock-in or telemetry, a new class of open-source editors and extensions has risen.

Roo Code (formerly Cline):

Roo Code is a VS Code extension that transforms the editor into an autonomous agent.

Features: It explicitly supports OpenRouter, Anthropic, DeepSeek, and Gemini APIs. It features "Modes" (Code, Architect, Ask, Debug) to tailor the AI's behavior and system prompts to the specific task

Control: Roo Code gives the developer absolute control. You can approve every tool call, set budget limits, and switch providers on the fly. It is the tool of choice for the "sovereign developer."

Kilo Code:

Kilo Code offers a transparent "pay for what you use" model.

- Features: It integrates with local models (via Ollama) or cloud APIs. It focuses on "Agentic Engineering," providing a team of AI agents that work together to build software

The CLI Renaissance

A surprising trend in 2024-25 is the return to the Command Line Interface (CLI). As agents become more capable, the graphical overhead of an IDE becomes unnecessary for many tasks.

Claude Code:

Anthropic's CLI tool allows developers to manage complex refactors directly from the terminal.

- Workflow: Users can issue natural language commands like "refactor the auth module to use JWTs," and the CLI manages the file reads, edits, and git commits autonomously.46 It creates a "headless" coding experience where the human acts as the manager.

Gemini CLI & OpenCode:

Google's Gemini CLI brings the power of Gemini 3 Pro/Flash to the terminal OpenCode provides an open-source, vendor-neutral alternative, popular among Vim/Neovim users who prefer lightweight, keyboard-driven workflows. These tools appeal to "vim lovers" and power users who want to script their AI interactions.

Vibe Coding Platforms

"Vibe Coding" refers to a development style where the user provides natural language intent ("Make it pop," "Add a dark mode toggle"), and the AI handles the implementation details entirely. The user validates the "vibe" (the outcome) rather than the code.

Lovable is a platform that generates full-stack web apps from text.

- Capabilities: It integrates with backends like Supabase and handles deployment. It effectively replaces the junior dev + DevOps loop for prototypes.48 Users can describe a "CRM for a dog walking business," and Lovable spins up the database, authentication, and frontend UI in minutes.

Bolt is a browser-based full-stack builder.

- Capabilities: It creates a containerized environment where the AI can write and execute code, allowing for instant preview and iteration.49 It is the ultimate "low-floor" tool for Vibe Coding, allowing anyone with an idea to build a functional app without setting up a local dev environment.

Jules (Google), Copilot, Cursor Agents:

Jules and the Background Agents by existing IDE heavyweights represents the "asynchronous" future of vibe coding.

- Workflow: Unlike an IDE assistant, these works on GitHub PRs. You assign it a task ("Upgrade all dependencies and fix breaking changes"), and it creates a Pull Request hours later.51 It is "fire and forget" software development, where the AI acts as a remote contractor.

The Fungible Future

The "Copilot Era" of 2023-2024 was defined by convenience and bundling. The "Unbundled Era" of 2026 is defined by control, specialization, and arbitrage.

The three layers of the stack—Provider, Model, and Tool—have become fully fungible. A developer today might write code in Cursor (Tool), routed via DeepInfra (Provider) to DeepSeek V3 (Model) for drafting, while using Claude Code (Tool) with Opus 4.5 (Model) for architectural review.

This unbundling has three profound implications:

Price Deflation: The competition at the Provider and Model layers (driven by Chinese labs and open weights) ensures that the cost of intelligence trends toward zero for standard tasks.

Tool Innovation: Freed from the burden of training models, tool builders (Roo, Cursor, Bolt) are innovating rapidly on UX, creating new paradigms like "Vibe Coding" where the interface is the product, not the model.

Sovereignty: The ability to self-host or choose vendor-neutral protocols (MCP) protects developers from platform capture. The stack is no longer a monolith; it is a set of Lego blocks.

As we look toward late 2026, the distinction between "writing code" and "managing agents" will continue to blur. The unbundled stack provides the necessary infrastructure for this transition, allowing developers to assemble the exact cognitive supply chain required to build the future.